Recently, I was chatting with a friend of mine about pre-acquisition due diligence. Charlie O’Rourke is one of the most seasoned technical executives I know. He’s been doing hardcore technology for over 30 years and is one of the pivotal brains behind WU/FDC’s multi-billion dollar payment processing platforms. The conversation revolved around a method he uses for identifying processing bottlenecks.

His thesis statement was that in a world where you need to spend as little as you can on an acquisition and still turn a profit quickly, problems of poor algorithmic implementations are “a good thing to have” because they are relatively easy to identify and fix. This is true, assuming that you have a grasp of large-volume transactional systems and you are handy with complex algorithms.

In today’s environment of rapid system assembly via the mashing of frameworks and off-the-shelf functionality like CRM or ERP, younger developers’ mastery of data structures is almost unheard of.

It’s true; most developers will probably never write an algorithm from scratch. But sooner or later, every coder will have to either implement or maintain a routine that has some algorithmic functionality. Unfortunately, when it comes to efficiency, you can’t afford to make uninformed decisions, as even the smallest error in choosing an algorithm can send your application screaming in agony to Valhalla.

So, if you have been suffering from recursive algorithmic nightmares or have never fully understood the concept of algorithmic efficiency (or plan to interview for a position on my team), here is a short and concise primer on the subject.

First, let’s start with definitions.

Best or Bust:

An important principle to remember when selecting algorithms is that there is no such thing as the “best algorithm” for all problems. Efficiency will vary with data set size and the availability of computational resources (memory and processor). What is trivial in terms of processing power for the NSA could be prohibitive for the average company.

Efficiency:

Algorithmic efficiency is the measure of how well a routine can perform a computational task. One analogy for algorithmic efficiency and its dependence on hardware (memory capacity and processor speed) is the task of moving a ton of bricks from point A to point B a mile away. If you use a Lamborghini for this job (small storage but fast acceleration), you will be able to move a small number of bricks very quickly, but the downside is that you will have to repeat the trip multiple times. On the other hand, if you use a flatbed truck (large storage but slow acceleration), you will be able to complete the entire project in a single run, albeit at a slower pace.

Notation:

The expression for algorithmic efficiency is commonly referred to as “Big O” notation. This is a mathematical representation of how an algorithm’s running time or memory use grows as its input grows. When plotted as a function of input size, algorithms will remain flat, grow steadily, or follow varying curves.

The Pessimistic Nature of Algorithms:

In the world of algorithm analysis, we always assume the worst-case scenario. For example, if you have an unsorted list of unique numbers and it will take your routine an hour to go through it, then it is possible in the best-case scenario that you could find your value on the first try (taking only a minute). However, following the worst-case scenario theory, your number could end up being the last one on the list (taking you 60 minutes to find it). When we look at efficiency, it’s necessary to assume the worst-case scenario.
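To make the best-case and worst-case distinction concrete, here is a minimal sketch that counts comparisons instead of minutes. The function name and the sample list are made up for illustration.

```python
def linear_search(items, target):
    """Return (index, comparisons); index is -1 when target is absent."""
    comparisons = 0
    for index, value in enumerate(items):
        comparisons += 1
        if value == target:
            return index, comparisons
    return -1, comparisons


numbers = [42, 7, 19, 88, 3, 61]  # unsorted, unique values (illustrative)

print(linear_search(numbers, 42))   # best case, first item: (0, 1)
print(linear_search(numbers, 61))   # worst case, last item: (5, 6)
print(linear_search(numbers, 100))  # absent, every item checked: (-1, 6)
```

The worst case costs one comparison per item, which is the number we plan for.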

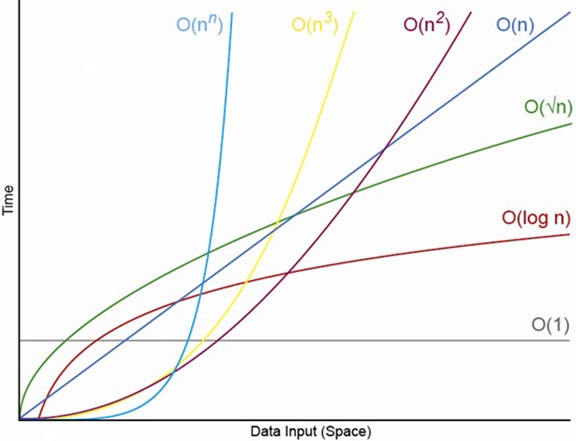

Image 1: Sample Performance Plots of Various Algorithms

O(1)

Performance is constant in time (processor utilization) or space (memory utilization), regardless of the size of the data set on which the algorithm operates. When plotted on a graph, these functions show no growth and remain flat.

An example of this type of algorithm is testing the value of a variable against a predefined hash table. The single lookup involved in this operation eliminates any growth curve.
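A small sketch of such a lookup, using a Python dictionary as the hash table. The table contents and the function name are illustrative assumptions, and dictionary lookups are constant time on average rather than in every conceivable case.

```python
# Illustrative predefined table: HTTP status codes mapped to their text.
STATUS_TEXT = {
    200: "OK",
    404: "Not Found",
    500: "Internal Server Error",
}


def describe_status(code):
    """One hash lookup, no matter how many entries the table holds."""
    return STATUS_TEXT.get(code, "Unknown")


print(describe_status(404))  # Not Found
print(describe_status(999))  # Unknown
```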

O(n)

Performance will grow linearly, in direct proportion to the size of the input data set.

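Expressions such as O(2N) or O(10 + 5N) still describe linear growth. The constants usually mean that extra per-item work (for example, some specific business logic) has been blended into the implementation, which should be avoided if possible. In Big O terms, constant factors and constant terms are dropped, so both simplify to O(N).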
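Here is a hedged sketch of what a constant factor looks like in practice. Both hypothetical functions below find the minimum and maximum of a list; one walks the list twice (roughly 2N steps), the other once (roughly N steps). Both step counts double when the list doubles, so both grow linearly.

```python
def min_max_two_passes(values):
    """Two separate loops over the data: about 2N steps."""
    steps = 0
    lowest = values[0]
    for v in values:
        steps += 1
        if v < lowest:
            lowest = v
    highest = values[0]
    for v in values:
        steps += 1
        if v > highest:
            highest = v
    return lowest, highest, steps


def min_max_one_pass(values):
    """A single loop over the data: about N steps."""
    steps = 0
    lowest = highest = values[0]
    for v in values:
        steps += 1
        if v < lowest:
            lowest = v
        elif v > highest:
            highest = v
    return lowest, highest, steps


for size in (1000, 2000):
    data = list(range(size))
    print(size, min_max_two_passes(data)[2], min_max_one_pass(data)[2])
# 1000 2000 1000
# 2000 4000 2000
```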

O(N+M) is another way of saying that two data sets are involved and that their combined size determines performance.

An example of this type of algorithm is a Linear Search, which finds an item in an unsorted list by going down the list one item at a time, without jumping. The time taken to search the list grows at the same rate as the list does.

O(N^2)

The performance will be directly proportional to the square of the size of the input data set. This happens when the algorithm processes each element of a set, and that processing requires another pass through the set (this is the square value). Deeper nesting of loops will result in the forms O(N^3), O(N^4), and in general O(N^k) for k levels of nesting.

Examples of this type of algorithm are Bubble Sort, Selection Sort, Insertion Sort, Shell Sort (with its original gap sequence), and Quicksort in its worst case.
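As an illustration of the pass-within-a-pass pattern, here is a plain Bubble Sort sketch that also counts comparisons. It deliberately omits the usual early-exit optimization so that the count is always N(N-1)/2.

```python
def bubble_sort(items):
    """Return (sorted_copy, comparisons) using an unoptimized bubble sort."""
    data = list(items)
    comparisons = 0
    n = len(data)
    for i in range(n - 1):          # outer pass over the set
        for j in range(n - 1 - i):  # inner pass for each outer pass
            comparisons += 1
            if data[j] > data[j + 1]:
                data[j], data[j + 1] = data[j + 1], data[j]
    return data, comparisons


print(bubble_sort([5, 2, 9, 1])[0])               # [1, 2, 5, 9]
print(bubble_sort(list(range(100, 0, -1)))[1])    # 4950
print(bubble_sort(list(range(200, 0, -1)))[1])    # 19900
```

Doubling the input from 100 to 200 items roughly quadruples the work.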

O(2^N)

Processing cost (time or memory) doubles with each additional element of the input data set, so the execution time of an O(2^N) algorithm grows exponentially.

The 2 indicates that time or memory doubles for each new element in the data set. In reality, these types of algorithms do not scale well unless you have a lot of fancy hardware.
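One classic source of this doubling is enumerating every subset of a set. The sketch below is an illustrative example of my choosing, not something taken from a particular system: each new element doubles the number of subsets that must be built.

```python
def all_subsets(items):
    """Return every subset of items; the result holds 2**len(items) lists."""
    subsets = [[]]
    for item in items:
        # Each existing subset appears again with the new item added,
        # so the list doubles on every iteration.
        subsets += [subset + [item] for subset in subsets]
    return subsets


print(len(all_subsets(["a", "b", "c"])))  # 8
print(len(all_subsets(list(range(10)))))  # 1024
print(len(all_subsets(list(range(11)))))  # 2048
```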

O(log n) and O(n log n)

Growth is logarithmic: the curve rises quickly at first and then tapers off as the data set grows. For example, if a data set of 10 items takes one second to process, a data set of 100 items will take two seconds, one of 1,000 items three seconds, and so on. Doubling the size of the input has little effect on running time because each iteration halves the data that remains to be examined. This makes O(log n) algorithms very efficient when dealing with large data sets. O(n log n) algorithms perform that logarithmic amount of work once for every element, so they grow somewhat faster than linear.

Generally, log N implies log₂ N, which refers to the number of times you can partition a data set in half, then partition the halves, and so on. For example, for a data set with 1024 elements, you would perform 10 lookups (log₂ 1024 = 10) before either finding your value or running out of data.

| Lookup # | Initial Dataset | New Dataset |
|---|---|---|
| 1 | 1024 | 512 |
| 2 | 512 | 256 |
| 3 | 256 | 128 |
| 4 | 128 | 64 |
| 5 | 64 | 32 |
| 6 | 32 | 16 |
| 7 | 16 | 8 |
| 8 | 8 | 4 |
| 9 | 4 | 2 |
| 10 | 2 | 1 |

A good illustration of this principle is Binary Search. It works on a sorted data set by selecting the middle element and comparing it against the desired value to see if it matches. If the target value is higher than the value of the selected element, it will select the upper half of the data set and perform the comparison again. If the target value is lower than the value of the selected element, it will perform the operation against the lower half of the data set. The algorithm will continue to halve the data set with each search iteration until it finds the desired value or until it exhausts the data set.
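The halving described above can be sketched as follows. This is a minimal iterative version that assumes the input is already sorted; the step counter is added purely for illustration.

```python
def binary_search(sorted_items, target):
    """Return (index, steps); index is -1 when target is absent."""
    low, high = 0, len(sorted_items) - 1
    steps = 0
    while low <= high:
        steps += 1
        mid = (low + high) // 2
        if sorted_items[mid] == target:
            return mid, steps
        if sorted_items[mid] < target:
            low = mid + 1   # continue in the upper half
        else:
            high = mid - 1  # continue in the lower half
    return -1, steps


data = list(range(1024))  # sorted data set with 1024 elements
print(binary_search(data, 0))  # (0, 10)
```

For 1024 elements the step count never exceeds 11 (the final comparison on a one-element range adds one to the ten halvings), compared with up to 1024 comparisons for a linear search.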

The important thing to note about log₂ N type algorithms is that they grow slowly. Doubling N has a minor effect on performance, and the logarithmic curves flatten out smoothly.

Examples of these types of algorithms are Binary Search, which is O(log N), and Heapsort, Merge Sort, and Quicksort (in the average case), which are O(N log N).
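For the O(N log N) side, here is a short Merge Sort sketch. It is a textbook top-down version written for readability rather than speed: the list is halved about log₂ N times, and each level of halving does a linear amount of merging.

```python
def merge_sort(items):
    """Return a new sorted list using top-down merge sort."""
    if len(items) <= 1:
        return list(items)
    mid = len(items) // 2
    left = merge_sort(items[:mid])   # sort each half recursively
    right = merge_sort(items[mid:])

    # Merge the two sorted halves in a single linear pass.
    merged = []
    i = j = 0
    while i < len(left) and j < len(right):
        if left[i] <= right[j]:
            merged.append(left[i])
            i += 1
        else:
            merged.append(right[j])
            j += 1
    merged.extend(left[i:])
    merged.extend(right[j:])
    return merged


print(merge_sort([38, 27, 43, 3, 9, 82, 10]))  # [3, 9, 10, 27, 38, 43, 82]
```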

Scalability and Efficiency

An O(1) algorithm scales better than an O(log N),

which scales better than an O(N),

which scales better than an O(N log N),

which scales better than an O(N^2),

which scales better than an O(2^N).

Scalability does not equal efficiency. A well-coded O(N^2) algorithm can outperform a poorly coded O(N log N) algorithm, but only up to a certain data set size. At some point, the performance curves of the two algorithms will cross and their relative efficiency will reverse.
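To see where such a crossover might fall, here is a rough sketch built on made-up cost models: a tight quadratic routine that costs N^2 units, and a sloppy N log N routine carrying a hypothetical 50x overhead. The constants are assumptions chosen only to show the effect, not measurements of any real code.

```python
import math


def quadratic_cost(n):
    """Hypothetical well-coded O(N^2) routine: one unit per step."""
    return n * n


def linearithmic_cost(n, overhead=50):
    """Hypothetical poorly coded O(N log N) routine with a 50x overhead."""
    return overhead * n * math.log2(n)


def crossover_point(limit=100_000):
    """Smallest N at which the quadratic routine becomes the slower one."""
    for n in range(2, limit):
        if quadratic_cost(n) > linearithmic_cost(n):
            return n
    return None


print(crossover_point())
```

With these particular constants the crossover lands somewhere between 256 and 512 items. Below it the quadratic routine wins, and above it the gap widens in favor of the N log N routine.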

What to Watch for When Choosing an Algorithm

The most common mistake when choosing an algorithm is the belief that an algorithm that was used successfully on a small data set will scale effectively to large data sets (factor 10x, 100x, etc.).

For small data sets, an O(N^2) algorithm like Bubble Sort will often work well enough. If you switch to a more complex O(N log N) algorithm like Quicksort, you are likely to spend a long time refactoring your code and, at that scale, realize only marginal performance gains.

More Resources

For a great illustration of various sorting algorithms in live action form, check out David R. Martin’s animated demo. For more formal coverage of algorithms, check out Donald Knuth’s epic publication on the subject, The Art of Computer Programming, Volumes 1-4.

If you are looking for some entertainment while learning the subject, check out AlgoRythmics’ series on sorting through dancing.

© Copyright 2011 Yaacov Apelbaum All Rights Reserved.
